This article is in The Spectator’s March 2020 US edition. Subscribe here.

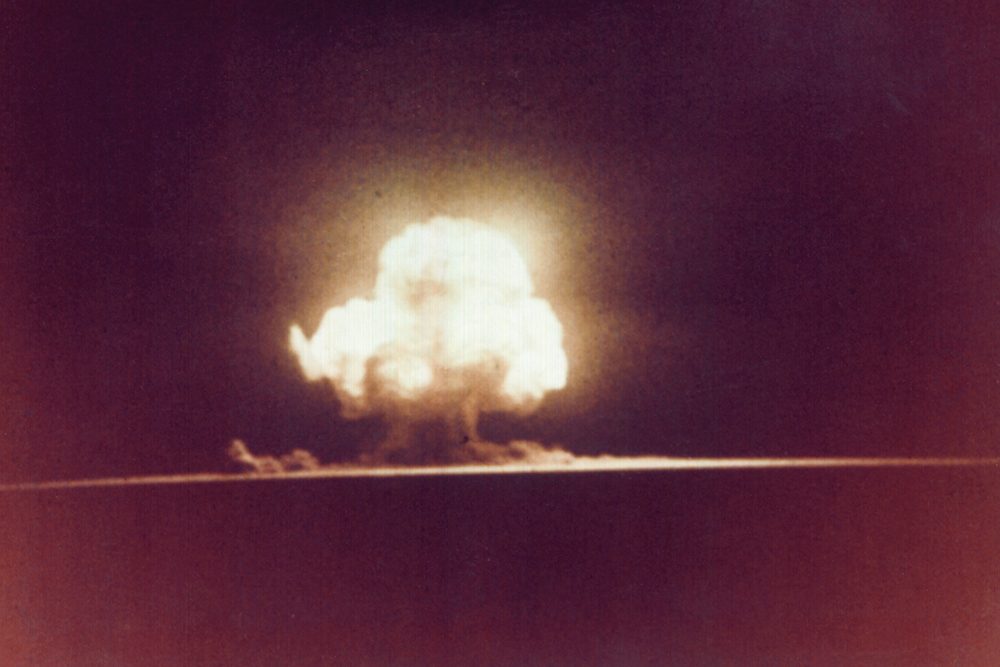

Humanity has come startlingly close to destroying itself in the 75 or so years in which it has had the technological power to do so. Some of the stories are less well known than others. One, buried in Appendix D of Toby Ord’s splendid The Precipice, I had not heard, despite having written a book on a similar topic myself. During the Cuban Missile Crisis, a USAF captain in Okinawa received orders to launch nuclear missiles; he refused to do so, reasoning that the move to DEFCON 1, a war state, would have arrived first.

Not only that: he sent two men down the corridor to the next launch control centre with orders to shoot the lieutenant in charge there if he moved to launch without confirmation. Had the captain not refused, I probably would not be writing this — unless with a charred stick on a rock.

It is one of several such stories, most nuclear-related but some not (for example, a top-security British lab accidentally releasing foot-and-mouth virus via a leaky pipe and causing a second outbreak, showing the limits of our biosecurity measures), illustrating how easily we could have dealt ourselves some unrecoverable blow. Some are truly chilling. But Ord’s point is that although we have come through the most dangerous time for humanity so far, greater dangers probably lie in the future.

The author, an Oxford philosopher, is concerned with existential risks: risks not just that some large percentage of us will die, but that we all will — or at least that our future will be irrevocably lessened. He points out that although the difference between a disaster that kills 99 percent of us and one that kills 100 percent would be numerically small, the outcome of the latter scenario would be vastly worse, because it shuts down humanity’s future. We could live for a billion years on this planet, or billions more on millions of other planets, if we manage to avoid blowing ourselves up in the next century or so.

Perhaps surprisingly, he doesn’t think that nuclear war would have been an existential catastrophe. It might have been — a nuclear winter could have led to a sufficiently dreadful collapse in agriculture to kill everyone — but it seems unlikely, given our understanding of physics and biology. He also says that climate change, although it may well lead to some truly dreadful outcomes (he uses climate model forecasts to sketch them out), is unlikely to render the planet uninhabitable.

He looks at other risks — asteroids and comets hitting the Earth, or supernovae in our stellar neighborhood scouring complex life — but points out that if there were even a 1 percent per-century risk of any of those happening, then it would be vanishingly unlikely that our species would have survived the 2,000 centuries that it has.
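The arithmetic behind that point is easy to check. The sketch below assumes a constant, independent 1 percent risk in each century, which is a simplification for illustration and not a model taken from the book; the function name is hypothetical.

```python
def survival_probability(risk_per_century: float, centuries: int) -> float:
    """Chance of getting through every century, assuming the same
    independent risk applies in each one (an illustrative assumption)."""
    return (1.0 - risk_per_century) ** centuries


# Inputs are the figures quoted in the review: 1 percent, 2,000 centuries.
p = survival_probability(0.01, 2000)
print(f"Chance of surviving 2,000 centuries: {p:.1e}")  # about two in a billion
```

On those assumptions the odds of our having lasted this long would be roughly two in a billion, which is why the natural risks must in fact be far lower than 1 percent per century.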

But in the near future, our ever-increasing ability to alter DNA could make the creation of genetically engineered pandemics achievable by gifted undergraduate students, and, therefore, hugely increase the risk of accidental or deliberate release. He adds, alarmingly, that the Biological Weapons Convention, the international treaty meant to reduce such risks, has an annual budget smaller than that of the average McDonald’s restaurant.

He also notes that we may be close — a century or less — to the creation of true artificial intelligence. His concern is not that such agents become conscious or rebel against their masters, but that they become amazingly competent, and that they will go wrong in ways analogous to the ways computers go wrong now — finding ‘solutions’ to problems we set them that fulfill the letter of the instructions but not the spirit — but which, because the AIs are so much more powerful, are lethal. As he says, this is not sci-fi: surveys of researchers now suggest that they think there is about a one in 20 chance of AI causing human extinction.

It’s not a gloomy book. Ord doesn’t take the easy route of bemoaning the human race as despoilers or vandals; he loves it and wants it to thrive. We have achieved so much — come to understand the universe, reduced poverty and illness — and he wants us to carry on doing so. The final section on our potential, should we survive the ‘precipice’ of the next century or two, is moving and poetic: whether we slip the surly bonds of Earth or simply live in harmony with it for a billion years, there is a lot of humanity still to come. And he is ‘cautiously’ optimistic that we shall survive, although he estimates about a one in six chance that we won’t: Russian roulette.

The challenges are obvious. We can’t learn via trial and error how to avoid risks that by definition can only happen once; and it’s hard to coordinate billions of people in hundreds of nations into doing things that will cost them directly and benefit them only indirectly. Ord’s prescriptions for how to meet those challenges are at times (perhaps unavoidably) woolly; he talks often about prudence and caution, but exactly how this translates to global action is unclear.

Still, The Precipice is a powerful book, written with a philosopher’s eye for counterarguments so that he can meet them in advance. And Ord’s love for humanity and hope for its future are infectious, as is his horrified wonder at how close we have come to destroying it.
